TCP #122: Your approval process needs a classification model, not just a queue

Three-tier framework, change matrix, and checklist for what platform must own, review, or release.

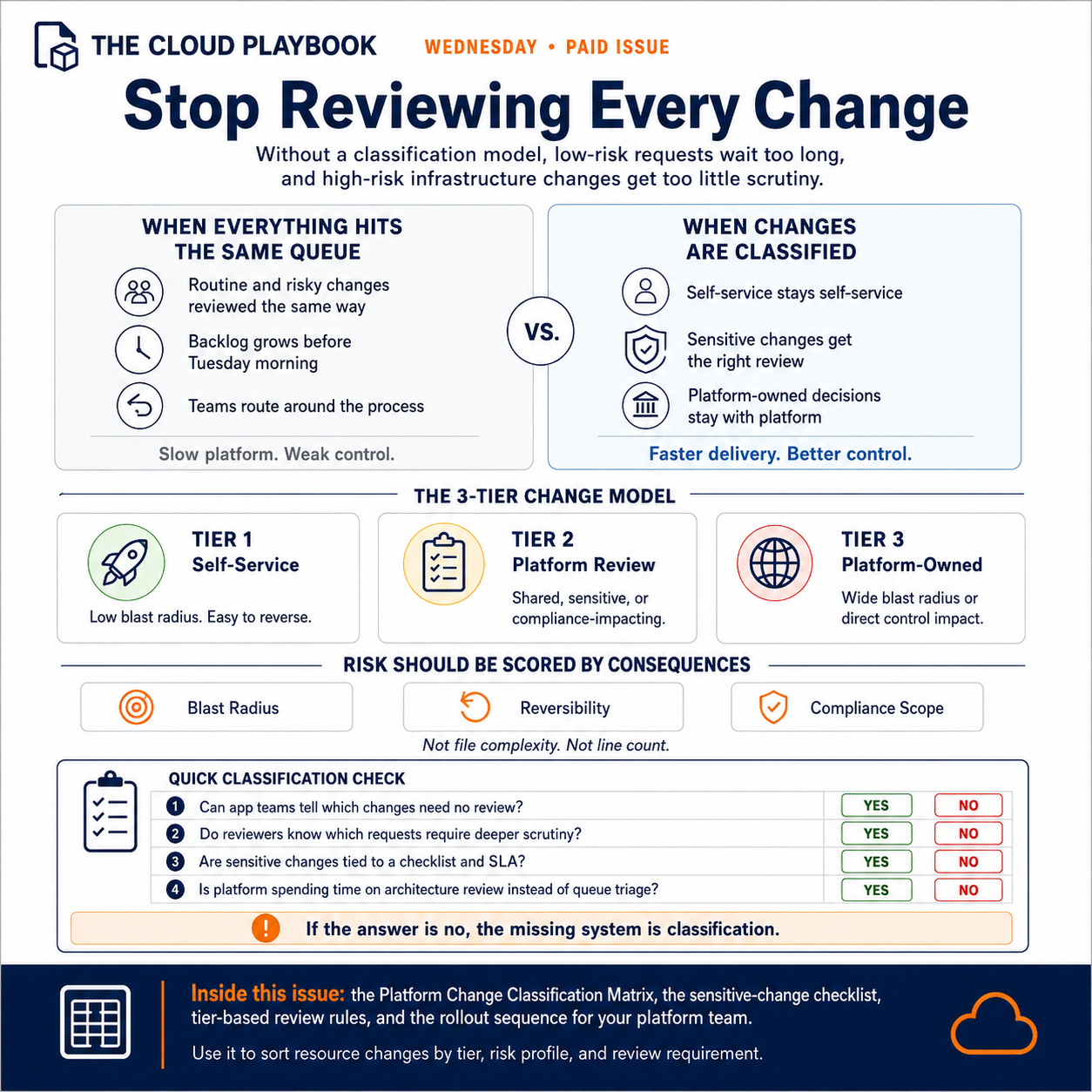

When platform teams grow their approval scope without a classification model, two things happen simultaneously.

App teams wait for approvals they do not actually need. And high-risk infrastructure changes move through the same queue as routine additions, reviewed at the same depth and with the same SLA.

The result is that the platform is slow, and the risks it was built to catch are still getting through.

Why Classification Determines Platform Scale

Every change without a classification model lands in the same queue. The platform engineer reviews it, asks the same baseline questions, and applies the same scrutiny regardless of actual risk.

This works for ten engineers. At forty, the queue is full before Tuesday morning. App teams submit on Monday and receive responses on Thursday. They learn to route around the process. They provision what they need outside the intake channel, outside tagging standards, outside the platform’s field of view.

The CTO sees slow delivery and a platform team that cannot explain its own backlog. The CFO sees an AWS bill with no clear attribution. The platform team answers both questions without controlling either outcome.

The root cause is not throughput. It is a classification. The platform is reviewing decisions that should never require review while inadequately scrutinizing those that genuinely do.

The Two Failures That Keep Teams Here

The first failure is binary thinking: every change either requires platform approval or it does not. Binary classification turns every edge case into a judgment call. Reviewers decide inconsistently. App teams learn which answer to expect and route requests accordingly. The approval process becomes a negotiation rather than a standard.

The second failure is calibrating risk on surface features rather than consequences. A complex Terraform file looks risky. A tag update looks trivial. But a missing cost allocation tag on a production RDS instance can result in months of misattributed spend. A well-validated Terraform module for a standard ECS service requires no review.

Risk lives in three variables: blast radius, reversibility, and compliance scope. Not in file complexity or line count. A classification model built on those three variables outperforms any heuristic based on surface appearance.

A Three-Tier Change Classification Model

Tier classification sorts every change into one of three buckets based on risk profile, not technical complexity.

Tier 1: Self-Service

Changes that fall within established standards affect only the requesting team’s scope and are easily reversible if incorrect. No platform review required. Examples:

Scaling compute within an approved instance family

Adding resources using approved Terraform modules with required cost allocation tags applied

Modifying application configuration for services already in scope

Updating routing rules within team-owned load balancers

App teams document Tier 1 changes in their own change log. Platform audits a sample monthly, not every instance.

Tier 2: Platform Review Required

Changes that introduce new patterns, cross team boundaries, affect shared infrastructure, or touch controls within compliance scope. Examples:

New VPC configurations or subnet additions

IAM roles with cross-account trust or elevated permissions

S3 bucket creation in production accounts

RDS instance provisioning above the defined size thresholds

Security group modifications affecting shared services

Any change to networking or compute that modifies a control in scope for SOC 2, FedRAMP, HIPAA, or ISO 27001

Platform reviews Tier 2 changes within a defined SLA: one business day for standard configurations, three business days for requests requiring compliance assessment. The sensitive-change checklist governs what reviewers verify before approving.

Tier 3: Platform-Owned Changes

Changes that must never leave the platform's hands. These are decisions with a wide blast radius, direct compliance control, ownership, or irreversible consequences if wrong. Examples:

Changes to account-level SCPs

Modifications to centralized logging or audit trail infrastructure

VPC peering, Transit Gateway, or Direct Connect configuration

Encryption key management and rotation policy

Organization-level IAM or identity federation configuration

Backup and disaster recovery configuration for shared infrastructure

App teams do not submit Tier 3 items as requests. They describe the business need. Platform engineers own the implementation.

The key question for classifying any change between Tier 1 and Tier 2: if this configuration is wrong, how long will it take for someone to detect the impact, and how hard will it be to reverse? That question drives tier placement more reliably than any checklist.

Building the Classification System at Your Platform

Follow this sequence to implement change classification:

1. List your accountability scope explicitly. What does your platform team actually own? Reliability SLAs, cloud cost reporting, compliance posture, networking, identity, observability? Write it as a named list. This list becomes the anchor for the entire model.

2. Map resource types to accountability areas. For each area, identify which AWS resource types and configuration choices directly affect the outcome. Cost accountability maps to compute sizing, storage provisioning, and cost allocation tagging. Compliance scope maps to encryption settings, access control, logging destinations, and network exposure.

3. Score each resource type for blast radius and reversibility. Blast radius: if this resource is misconfigured, what breaks? Reversibility: if the error is caught after deployment, how quickly can it be corrected without impacting production? High blast radius plus low reversibility equals Tier 3. Low blast radius plus high reversibility equals Tier 1.

4. Define the self-service boundary explicitly. Publish the list of resources and configurations that app teams can provision without review. Be specific. “EC2 instances using approved AMIs within approved instance families, tagged with required cost allocation tags, deployed via approved Terraform modules, into pre-approved subnets” is a self-service definition. “Standard EC2 instances” is not.

5. Build the sensitive-change checklist. For each Tier 2 resource category, define the specific items a reviewer verifies before approving. The checklist for IAM role creation differs from the checklist for RDS provisioning. Keep each checklist under 10 items. More than that signals the category needs further decomposition.

6. Name the escalation path. When a reviewer is uncertain about tier placement for an ambiguous request, who makes the final call? Name that person and document the process before the first edge case arrives.

Artifact in This Issue

The artifact is the Platform Change Classification Matrix: a structured reference table for categorizing infrastructure changes by tier, risk profile, and review requirements.

The matrix contains:

Resource type or change category (IAM role creation, S3 bucket provisioning, SCP modification, and 20 additional common resource types)

Risk indicators for each: blast radius score, reversibility score, compliance scope flag, shared infrastructure flag

Tier assignment: Self-Service, Platform Review, or Platform-Owned

Review the owner and review the SLA for every Tier 2 entry

Sensitive-change checklist for each Tier 2 category, listing the specific items a reviewer verifies before approving

The matrix is structured as a flat reference table. Sort by tier to give reviewers a quick-reference view. Sort by resource type to give app teams a self-service lookup.

Use this matrix as the starting point for your next platform ops review session. Walk through the resource types in your environment, assign each to a tier, and resolve disagreements using the blast radius and reversibility scoring. Publish the completed version in your internal wiki and reference it as the first step in your intake form before any request enters the queue.

What to Measure and When to Review

Classification distribution. Track the percentage of intake requests landing in each tier each week. A healthy distribution: 60 to 70 percent Tier 1, 25 to 35 percent Tier 2, 5 to 10 percent Tier 3. If Tier 1 is below 50 percent, the self-service boundary is too narrow. If Tier 2 exceeds 50 percent, audit whether reviewers are escalating ambiguous requests rather than approving or rejecting them.

Review cycle time for Tier 2. Mean time from submission to approval decision. Set a target SLA and track it weekly. If cycle time consistently exceeds the SLA, investigate whether the sensitive-change checklist is calibrated correctly or whether submitters are arriving with incomplete context.

Review the matrix quarterly. As your approved Terraform module library grows and app teams become familiar with standards, Tier 2 items should migrate to Tier 1. The model should become less restrictive over time as platform maturity increases.

What Changes When Classification Is Right

When the classification model is working, the platform team is no longer the one slowing delivery. App teams run self-service for their own decisions. Reviewers focus on the changes that actually need review. Platform-owned decisions stay in the platform's hands.

Platform engineers stop triaging an undifferentiated backlog. They start doing architecture review work worth doing.

The compliance evidence improves. Tier 3 changes leave a clean ownership trail. Tier 2 approvals are documented against a checklist. Tier 1 changes are auditable via sampling rather than exhaustive review.

The platform team builds a reputation for predictable, fast responses. That reputation is more durable than any SLA commitment made without a supporting model.

Use this matrix as the agenda for your next platform ops session.

Walk your team through the resource types in your environment and assign tiers. Share the completed matrix with the EMs and senior engineers who submit intake requests.

Run a calibration pass after the first 30 days: sort the previous month’s requests by tier and verify the classifications held.

If you manage a platform for multiple product teams, forward this to the EM or senior engineer who will be the primary intake submitter on each team.

That’s it for today!

Did you enjoy this newsletter issue?

Share with your friends, colleagues, and your favorite social media platform.

Until next week — Amrut

Get in touch

You can find me on LinkedIn or X.

If you would like to request a topic to read, please feel free to contact me directly via LinkedIn or X.